I Hacked My Smart Lights... And Things Got Way Out of Hand.

TLDR: A Python-powered toolkit to take over Philips Wiz smart lights, with a stock market ticker, audio and music visualization, and DIY Ambilight video sync.

You know how weekend projects go? You start with a tiny, simple idea. "I'll just build this one little thing," you tell yourself. Five hours later, you're 20 browser tabs deep into obscure documentation, a handful of Reddit threads, and an endless conversation with Claude or ChatGPT about how to build it out.

Well, my weekend was kind of like that. It all started with my Philips Wiz smart lights. They're fine. You open the Wiz Connect app, you tap a button, the light changes. But "fine" is boring. I wanted a little more control. I wanted to make them do things the app's designers never dreamed of.

So, I got bored and did what any sane developer does: I fired up a network sniffer.

The "Aha!" Moment

After a few minutes, I found the holy grail. These Rs. 500 smart bulbs... they don't have a complicated, encrypted API.

They just listen for raw JSON commands sent over UDP to port 38899.

All you have to do is pass this JSON:

{"method": "setPilot", "params": {"r": 255, "g": 0, "b": 0}}

That's it. That's the key to access the lights. My weekend was gone. It was time to build. Thanks to Aleksandr Rogozin and his blog for the quick head start: Here is the Link
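For the curious, here is a minimal sketch of sending that command from Python using only the standard library. The helper names (`build_set_pilot`, `send_color`) are illustrative and not necessarily what wiz_control.py uses; the port and JSON shape are the ones described above.

```python
import json
import socket

WIZ_PORT = 38899  # UDP port the bulbs listen on, as described above


def build_set_pilot(r: int, g: int, b: int) -> bytes:
    """Encode a setPilot colour command as UTF-8 JSON bytes."""
    for name, value in (("r", r), ("g", g), ("b", b)):
        if not 0 <= value <= 255:
            raise ValueError(f"{name} must be in 0..255, got {value}")
    command = {"method": "setPilot", "params": {"r": r, "g": g, "b": b}}
    return json.dumps(command).encode("utf-8")


def send_color(ip: str, r: int, g: int, b: int, port: int = WIZ_PORT) -> None:
    """Fire-and-forget: send one setPilot datagram to the bulb at `ip`."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(build_set_pilot(r, g, b), (ip, port))
```

Calling `send_color("192.168.1.50", 255, 0, 0)` would turn a bulb at that (hypothetical) address solid red. UDP gives no delivery guarantee, so a sturdier version would wait for the bulb's reply and retry.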

Level 1: The "Simple" API

First things first. I wrote a simple Python class (wiz_control.py) to discover the lights and send commands. Turn on, turn off, set color. Easy.

Then, I wrapped it in a FastAPI server (api_server.py). Now I had web endpoints. I could control my lights from my browser, from a script, from anywhere. I even threw together a quick index.html for a basic UI.

I'd built my own, better Wiz app. A solid project. I should have stopped there but I did not stop there.

Level 2: The Escalation (My Lights Can Dance)

I was looking at my projector, music playing, and it hit me.

"I have programmatic control of light. I have a microphone. I know what must be done."

So I wrote audio_visualizer.py.

The idea: capture audio from my mic, run a Fast Fourier Transform (FFT) on it in real-time, and split the audio into frequencies.

Bass (20-250Hz) → Mapped to the Red channel.

Mids (250-4000Hz) → Mapped to the Green channel.

Treble (4000-20000Hz) → Mapped to the Blue channel.
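Here is a rough sketch of that band-to-channel mapping for a single audio frame. To keep it dependency-free it uses a small pure-Python radix-2 FFT; a real-time script would more likely lean on something like `numpy.fft.rfft`. Microphone capture is left out, and normalising against the loudest band is my assumption for illustration, not necessarily how audio_visualizer.py scales things.

```python
import cmath


def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT; len(samples) must be a power of two."""
    n = len(samples)
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


# Frequency bands in Hz, taken from the mapping above.
BANDS = {"r": (20, 250), "g": (250, 4000), "b": (4000, 20000)}


def frame_to_rgb(samples, sample_rate=44100):
    """Map the bass/mid/treble content of one audio frame to an (r, g, b) triple."""
    n = len(samples)
    magnitudes = [abs(c) for c in fft(samples)[: n // 2]]  # positive frequencies only
    bin_hz = sample_rate / n  # width of one FFT bin in Hz
    energy = {}
    for channel, (low, high) in BANDS.items():
        band = [m for i, m in enumerate(magnitudes) if low <= i * bin_hz < high]
        energy[channel] = sum(band) / len(band) if band else 0.0
    peak = max(energy.values()) or 1.0  # avoid dividing by zero on silence
    # Assumption: scale so the loudest band maps to 255.
    return tuple(round(255 * energy[c] / peak) for c in ("r", "g", "b"))
```

Feeding it 1024-sample frames at 44.1 kHz gives bins about 43 Hz wide, which is coarse for the bass band but enough to tell a kick drum from a cymbal.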

I ran the script, put on some music, and... it was insane.

The lights were reacting to every beat of the music. Bass drops made the room flash deep red. Cymbals sent pulses of blue. It was alive.

But then I thought: why just one mode?

I built a pulse mode: The light stays a warm white, but the brightness pulses with the music's amplitude.

I built a strobe mode: Aggressive white flashes on every single beat. Perfect for EDM.

I built a multi mode: If you have multiple lights, one light becomes the bass, one becomes the mids, and one becomes the treble. It's bonkers.
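As an illustration of the pulse idea, here is a small sketch that turns one frame's loudness (RMS amplitude) into a brightness level. The `temp` and `dimming` parameter names, and the 10 to 100 dimming range, are assumptions about the bulb's setPilot command that this post doesn't spell out; `rms` and `pulse_payload` are made-up helper names.

```python
import json
import math


def rms(samples):
    """Root-mean-square amplitude of one audio frame (samples in -1.0..1.0)."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def pulse_payload(samples, temp_kelvin=2700, min_dim=10, max_dim=100):
    """Build a warm-white setPilot command whose brightness tracks loudness."""
    level = min(rms(samples), 1.0)  # clamp in case the input clips
    dimming = round(min_dim + (max_dim - min_dim) * level)
    # Assumed parameter names: "temp" (Kelvin) and "dimming" (percent).
    command = {"method": "setPilot", "params": {"temp": temp_kelvin, "dimming": dimming}}
    return json.dumps(command)
```

In practice you would probably smooth the level across frames so the light breathes rather than flickers.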

Level 3: The "Next-Level" Insanity (DIY Ambilight)

At this point, it was probably around 11 PM. I was running on pure adrenaline and dangerously high levels of "what if..." I was brainstorming with ChatGPT and Claude in parallel when one more idea hit me.

"Wait... what if the lights reacted to videos?"

Philips and others charge a fortune for this "Ambilight" feature. I was pretty sure I could build it with OpenCV and my video_visualizer.py script.

And I did 😎

I wrote a new analyzer that loads any video file (mp4), reads it frame by frame, and does two things:

Analyzes the color: I built a dominant_color mode that uses K-means clustering to find the main color of the entire frame and sends it to the lights.

Analyzes the edges: I built a true edge_analysis mode that samples only the pixels on the outer border of the frame, just like a real Ambilight.
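The edge-sampling idea can be sketched without OpenCV at all. In this simplified version a frame is a plain list of rows of (r, g, b) tuples, and the border pixels are simply averaged; with OpenCV the frame would be a NumPy array in BGR order instead, and video_visualizer.py may well pick the colour differently. `edge_color` is a hypothetical name.

```python
def edge_color(frame, border=1):
    """Average the pixels within `border` pixels of the frame's outer edge.

    `frame` is a non-empty list of rows, each row a list of (r, g, b) tuples.
    """
    height, width = len(frame), len(frame[0])
    totals = [0, 0, 0]
    count = 0
    for y, row in enumerate(frame):
        for x, pixel in enumerate(row):
            on_edge = (
                y < border or y >= height - border
                or x < border or x >= width - border
            )
            if on_edge:
                for i in range(3):
                    totals[i] += pixel[i]
                count += 1
    return tuple(round(t / count) for t in totals)
```

A wider `border` gives a steadier colour at the cost of looking at more pixels per frame.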

I put on a teaser for the upcoming movie Ramayana. An explosion happened on screen, and my entire room flashed in sync with it. A scene with an arrow turned my walls a deep, pulsing blue (at ~93ms latency).

This was, without a doubt, one of the coolest things I have ever built, out of ~150 projects.

I even made a hybrid mode that takes the color from the video and the brightness from the video's audio track. It's the ultimate sensory experience.

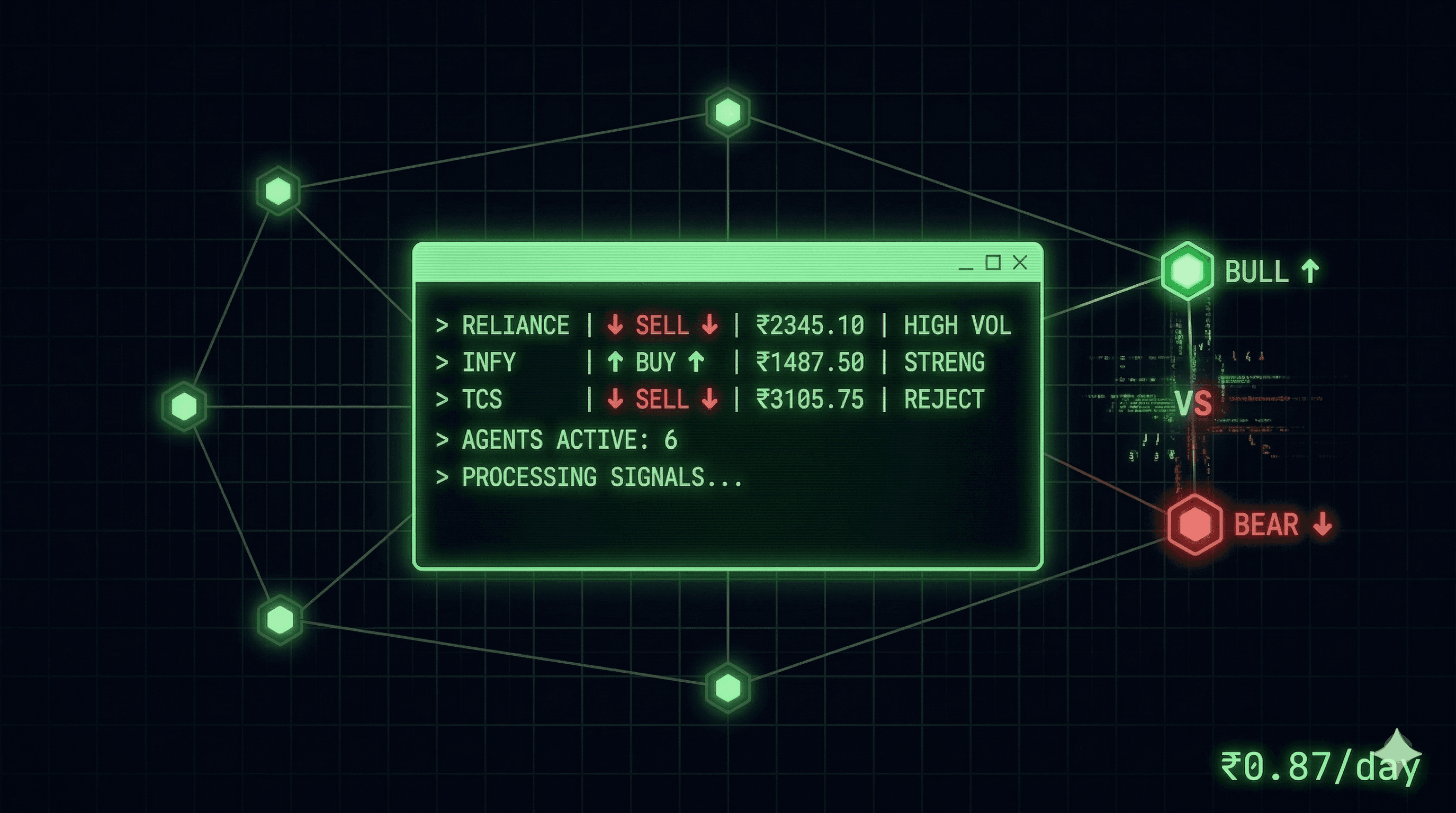

Level 4: The Final Descent (My Room is a Stock Ticker)

This is the part I can't really explain. This is where the project went from a "cool personal hack" to "a full-blown obsession."

Recently, a post from Pankaj Tanwar (a builder I hugely admire) went viral. He had hooked his lights up to his Zerodha portfolio using a Raspberry Pi 4.

I saw that and thought, "That's it. That's the final level."

I had to do it. But I use Groww.

I figured, 'What's the harm in asking?' I found Vamsi on the Groww team, sent him a DM, and showed him my music and video visualizers.

He loved it. And then he did something legendary: he hooked me up with free, exclusive access to the official Groww Trading API.

Pankaj asked me to exploit it immediately. Now I had to build it.

The logic is simple:

Fetch my stock's price (let's say GROWW).

Fetch the opening price for the day.

If current_price > opening_price, my lights turn GREENish.

If current_price < opening_price, my lights turn REDish.

The brightness of the light is tied to the magnitude of the change. A +2% day is brighter than a +0.5% day.
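The rules above boil down to a small pure function. This is a sketch under a few assumptions of mine: a 2% move counts as full brightness, an unchanged price shows white, and brightness is expressed as a 10 to 100 `dimming` value. Fetching prices from the Groww API is deliberately left out, and `stock_color` is a made-up name.

```python
def stock_color(current_price, opening_price, full_scale_pct=2.0):
    """Map the day's price change to a colour and brightness for the lights."""
    change_pct = (current_price - opening_price) / opening_price * 100
    # Assumption: moves of `full_scale_pct` or more saturate the brightness.
    strength = min(abs(change_pct) / full_scale_pct, 1.0)
    dimming = round(10 + 90 * strength)
    if change_pct > 0:
        r, g, b = 0, 255, 0      # up on the day: green
    elif change_pct < 0:
        r, g, b = 255, 0, 0      # down on the day: red
    else:
        r, g, b = 255, 255, 255  # flat: neutral white (my choice)
    return {"r": r, "g": g, "b": b, "dimming": dimming}
```

The returned dictionary is shaped so it could be dropped into the `params` of a setPilot command, assuming the bulb accepts `dimming` alongside the colour channels.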

My room is now a real-time trading floor indicator:

I can just sit here, and if my peripheral vision suddenly turns a deep, angry red, I know I'm losing money. This is either genius or the worst idea I've ever had for my mental health. (btw I don’t trade - Mutual Funds sahi hai)

I even built a stock_replay.py that takes historical data and replays the entire market day at 20x speed, so you can watch it as a frantic green-and-red light show.
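The replay trick is mostly about timing: keep the gaps between historical ticks, but shrink them by the speed factor. Here is a minimal sketch, assuming the history is a list of (timestamp in seconds, price) pairs sorted by time; the `replay` function and its callback are illustrative, not the actual stock_replay.py code.

```python
import time


def replay(ticks, on_tick, speed=20.0, sleep=time.sleep):
    """Replay (timestamp_seconds, price) ticks at `speed` times real time.

    `on_tick` is called with each price; `sleep` is injectable for testing.
    """
    previous_ts = None
    for ts, price in ticks:
        if previous_ts is not None:
            # Preserve the original spacing between ticks, compressed by `speed`.
            sleep(max(ts - previous_ts, 0) / speed)
        on_tick(price)
        previous_ts = ts
```

At 20x, a 6.25-hour trading session plays back in just under 19 minutes.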

The Aftermath

So, yeah. My simple weekend project to build a custom light switch spiraled into a full-sensory data visualization platform that reacts to music, movies, and the Indian stock market.

All because a smart multicolour light bulb had an open UDP port.

It's been a wild ride, and the project is already getting some love and sparking conversations on Twitter and r/developersIndia.

You can find all the code from the simple API to the stock ticker on my GitHub (myselfshravan/wiz-hack).

If you've made it this far, great. Thanks for reading, and stay tuned for what's coming next…

It could be a sound box that plays music based on the stock value, an AI-powered stock tradebook analyser, or some cool browser extensions.